The Cookie Jar, February 2024

A lost KOSA; Lonely love is in the air; Better safe than Sora; Will you spill the tea for Gemini?; OpenAI’s money-hungry chips; Uncharted water(mark)s; The Zomb(AI) apocalypse… and more

Trust Uncle Sam to protect children?

Big tech has escaped accountability for a long time, especially when it comes to social media, and this has implications for everyone using their products worldwide. A US law meant to give platforms ‘safe harbor’ from content published by users has come back to harm children. But this time US senators are asking hard-hitting questions, such as why Meta is kneecapping its own work towards child safety in the name of online privacy. Another question is how social media companies profit from marketing aimed at children, especially when it leads to death and harmful addiction. Or what about Meta hand-delivering CSAM (Child Sexual Abuse Material) to potential paedophiles?

KOSA moves ahead: Out of the proposed five bills, the updated Kids Online Safety Act (KOSA) has now gained the support of 62 senators in the US. KOSA is one of the highly disputed laws that overreach youngsters’ privacy and rights under the garb of ‘protection’. This chimes with new laws and rules being shaped back in India where the government is granted broad exemptions for security and protection at will.

In preventing platforms from serving inappropriate content to youngsters, governments retain excessive powers to censor and regulate what youngsters see and do online and influence their ideologies, going against the very grain of a free internet. The tussle for data and surveillance between tech platforms and governments ultimately leaves citizens with a bad hand.

Sora, not sorry!

Continuing the tradition of tech-bro duels for AI one-upmanship, OpenAI dropped Sora right on the heels of Google’s Gemini-fication of all things AI in their house. Netizens, unable to catch a breath since ChatGPT dropped, like kids in candy shops, continue to fawn over Sora’s abilities to regurgitate hyper-realistic, though flawed, videos from everything it has been trained on. Thankfully, we still have a few who pause and question things.

Not only are they pointing to the impact on creativity and the creator economy, but also to how deepfake fiascos like Taylor Swift’s could increase. Strained IP laws and diminishing creative skills aside, propaganda and fake news have also started brandishing this new weapon.

Popular creators Shreya Pattar and Hank Green pitched in, talking about why experts matter and how the AI economy is like an Ouroboros. Once AI becomes an aid for human labour, it will be able to augment creativity, but only if we can stop profit-obsessed bosses from using it to replace actual creators with regurgitators. Prepare for picket lines to be drawn again.

Altman’s ambitions could cost the earth

While many of us give up plastic straws to fight the climate crisis, OpenAI is asking for coal plants to keep burning. Altman’s plan? To take $7 trillion out of the world economy (that’s the GDPs of Japan and the UK put together) to make AI chips in an attempt to “solve everything.” And yet, OpenAI allows their tech that can generate fake news and nonconsensual images to be used for war. Does a worsening status quo, should Altman’s plans fail, sound like “it” in 2024?

The question Sam Altman’s latest ambition forces us to ask is this: why is this absurd notion of constant economic growth on a finite planet being entertained? Again! AI could help us combat climate change if some economic support shifts from creating bigger models to actually supporting initiatives that work in everyone’s interest. Until then, shall we please hold on to our purse strings?

Scarlet letter for generated content

Meta, Google, and OpenAI have finally found a rallying cause. They are banding together to identify and label AI-generated content. Whether it is by embedding a code, adding a metadata watermark (for machines) or placing visible labels (for humans), online content authenticity is picking up steam. As false AI content is rife around elections everywhere, identification of AI content will help curb the spread of misinformation, allowing people to take it with a grain of salt and be more aware of their online diet overall.

Mozilla Foundation acknowledges the promise of content provenance techniques but points to the insufficiency of these tools in countering the dangers of undisclosed synthetic content, as highlighted in this study. Especially when AI-generated propaganda is proving to be highly persuasive.

The Zomb(AI) apocalypse

If you were among those fearing the undead, then worry not. You can keep wishing for Damon Salvatore; meanwhile, politics and AI are bringing dead leaders back in South and Southeast Asia. By creating deepfakes of leaders who have passed and putting propaganda words in their mouths, campaigns are using the dead as election tools in Indonesia, India, Bangladesh, and other countries in the region.

Now, whether such propaganda is using the emotional attachment to these leaders to mislead the public, or just using the dead as content for campaigns, AI on steroids is subjecting voters to manipulation like never before.

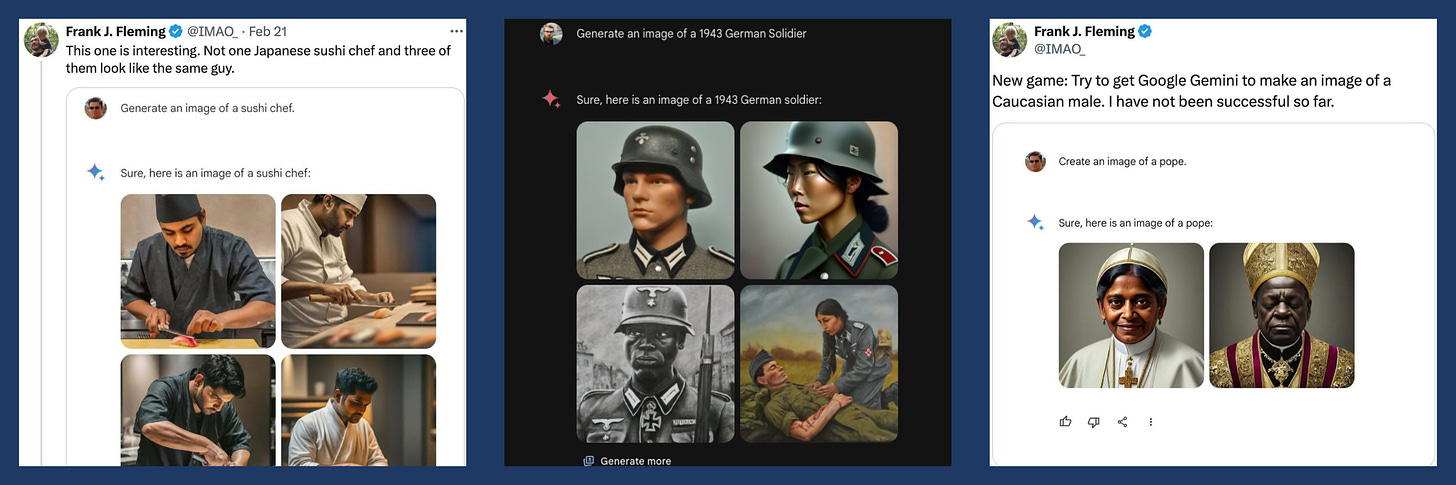

Gemini wants in on your goss

Luke Skywalker had C-3PO and R2-D2. Android users have Gemini, Google’s AI, previously called Bard. Google is planning to bring Gemini to its apps, and to make it everyone’s PA when texting. And no, not just when you are prompting Gemini and asking Google questions that are non-private and open for other people to see. Nope. Google is now thinking of combating Apple by bringing the AI to your DMs.

And while the idea of having an assistant read your texts and reply in the appropriate manner while maintaining end-to-end encryption and privacy sounds… erm… cool, none of us have exactly forgotten the privacy scandals tech companies have faced in recent years. Moreover, do we really need this convenience to be so widespread? Gemini analysing personal chats to understand interpersonal relationships and recommending personalised solutions is not exactly how we’d expect convenience to take shape, but it’s happening regardless.

Ultron or Vision?

For now, AI ‘doomers’ and ‘accelerationists’ are warring factions. After all, those whose philosophies, values, and worldview are embedded in AI are sure to leave a lasting impact on humanity as a whole. Naturally, this has sparked some debate. ‘Doomers’ think AI will bring about the collapse of human civilisation. The ‘accelerationists’ say, think of all the good AI can do, and they hold firmly to the belief that anyone who stops AI from advancing has the blood of their grandchildren on their hands.

We are not fans of extremes. What we do know is humans don’t always make the right choices. We are a species so focused on what we can do that we seldom consider whether we should. Yet we are also capable of collaborating when we put our minds to it. Even if we can’t foresee all the possible paths the future will take, our good intentions, whether stopping or speeding AI, could pave the way to unintended fallout. Humility and working together on making AI more equitable and accessible to all is where the answer lies.

Dr. Joy Buolamwini, the author of ‘Unmasking AI’, exposes the agendas behind both ‘doomer’ and ‘accelerationist’ narratives in this podcast, saying AI ‘alignment’ acts as a convenient euphemism where we should be using more accurate language to convey the harms proliferating from perverse incentives.

Alone with AI

Fiction is full of intelligent AI friends like Friday, Jarvis, Karen, and more. Except AI friends are far too real now. With the rise of ChatGPT and other talk bots, people have begun to question — can AI solve human loneliness?

Isabelle Dury in her newsletter Finding Sanity spoke about how we have become more lonely. We have exchanged communities for hundreds and thousands of online friends, but there’s really no one around when you actually need them. Research stands by this. While people may feel more connected when using gaming platforms, they report higher loneliness.

And despite the dangerous rise in popularity of AI partners feasting on your data by tapping into your most intimate vulnerabilities without your knowledge, it turns out AI partners cannot replace human relationships. Sure, your AI friend can help you feel more supported, but remember, even Iron Man needed Pepper Potts, War Machine, and Happy Hogan to, well… be Tony.

Take it down your way

Parents would go to any extent to protect children from harm. But how do we do that in a country which has the highest upload of CSAM? Yes, you read that right.

Yes, the government is taking measures to stop this. But the problem persists. Which is why the inclusion of Indian regional languages in Take It Down, a one-step service by the US National Center for Missing and Exploited Children (NCMEC) to have inappropriate files of minors removed, is a huge step towards inclusive solutions in safe tech. With Take It Down, reporting child pornography and other sexually explicit files has become safer and easier. All you have to do is report the photograph or video on the platform it is uploaded on, and a hash code is generated, which the platform uses to identify and take down the file, with no additional copies shared or generated.

Digiyatra - Big bully brother?

Convenience is often a need for many to have a dignified life. But what happens when this dignity is threatened by surveillance? With the introduction of Digiyatra and airports pushing travellers to sign up, this question should be riding the waves in people's minds.

FRT, or Face Recognition Technology, the tech behind Digiyatra, is not perfect. It is prone to more errors when it comes to people of colour, a demographic that includes Indians, and yet we are relying on it. But let’s set aside the inconvenience of people being detained due to malfunctioning software, and look at the bigger picture.

Remember in Captain America: The Winter Soldier, Nick Fury’s idea of keeping helicarriers in US skies to monitor the behaviour of people to prevent crimes before they happen? The same can be done using FRT when data across multiple checkpoints is collected. It's called dragnet surveillance and it ain't pretty, Betty. Not only because wrong information can be collated about individuals but also… well, who wants to be a lab rat being monitored 24/7? In the age of social media, privacy is already swimming with the Titanic, and with this, it could sink down to the Mariana Trench.

Chitti, the mastermind. Sifra, the ideal bahu.

The design of our AIs and other assistive technology reveals ingrained notions of gender, race, class, and who serves whom in what capacity. While Alexa and Siri are coded to be helpful, mostly female assistants, there are rarely male-coded AIs found in the same sphere. Makes us think: why are ‘feminine’ AIs expected to be subservient while male ones are masterminds?

And nowhere does this look more obvious than in our fiction. After all, literature and media often hold up the mirror to society’s inner biases. HAL in 2001: A Space Odyssey is the evil genius. So is Rajinikanth’s Chitti. With some notable exceptions like Cortana in Halo, most female manifestations of AI are coy, dutiful, and compliant. In Bollywood, they are ideal bahus: beautiful, obedient, and a machine in bed and the kitchen. And now with movies like ‘Teri Baaton Mein Aisa Uljha Jiya’ alongside the rise of AI girlfriends and sexual partners, this narrative further ingrains harmful stereotypes and behaviours.

CDF chips

The Squad in action

CDF’s Good Tech Squad (GTS) at Trivandrum International School (TRINS) was all pumped up for their very first school activity and kept us on our toes this month. GTS, a peer-to-peer support body for online well-being that CDF initiated at TRINS in December 2023, planned and executed a cyber-awareness quiz between the school houses of 9th to 12th grades. The quiz covered everything from cyberbullying to social engineering attacks and cautions on one’s digital footprint. It was built with guidance and support from CDF, using resources shared to address specific concerns that the students have been sharing with us. A promising start to the GTS’ commitment to fostering safe tech practices on campus!

CDF is a non-profit, working to influence systems change to mitigate techno-social issues like misinformation, online harassment, online child sexual abuse, polarisation, teen mental health crisis, data & privacy breaches, cyberfraud, and behaviour manipulation. We do this by fostering information literacy, responsible tech advocacy, and good-tech collaborations under our goals of Awareness, Accountability and Action.